Quick decision toggles

Use this quick triage before reading the full guide. Then validate with a 30-day pilot.

Choose Texta if...

- You want one workflow from visibility signal to assigned action.

- You run weekly operating reviews and need a fast execution rhythm.

- You want source diagnostics, mention movement, and next-step guidance in the same workspace.

Choose Otterly.ai if...

- You want AI search monitoring focused on tracking brand and topic visibility in assistant outputs over time.

- Your team is willing to assemble decisions across multiple systems or longer analysis cycles.

- Your near-term priority is strategic reporting alignment more than operator execution speed.

Run a dual pilot if...

- Two or more departments disagree on reporting vs execution priorities.

- You need objective evidence before procurement or migration.

- You want a weighted scorecard built from your own prompts, competitors, and sources.

Texta vs Otterly.ai: Lightweight Monitoring vs Deeper Execution Workflow

Last updated: March 14, 2026

If your team needs straightforward AI visibility monitoring with clear tier limits, Otterly.ai is often a strong starting point. If your team needs a deeper operating workflow that connects visibility movement to intervention planning and execution, Texta is usually the stronger fit.

This page is built for buyers comparing Texta and Otterly.ai. It focuses on practical buying questions: pricing model, functional fit, rollout risk, and team adoption.

TL;DR

- Texta: better for execution-heavy GEO teams that need monitor-to-action continuity.

- Otterly.ai: better for teams seeking simple onboarding and clear prompt-tier packaging.

- Otterly public pricing is explicit: $25/mo Lite, $160/mo Standard, and $422/mo Premium as of the March 14, 2026 snapshot.

- Otterly add-ons (extra prompts and additional engines) materially affect total cost.

Internal links: Texta pricing, all comparisons, start with Texta.

Visual Evidence (Scoped Screenshots)

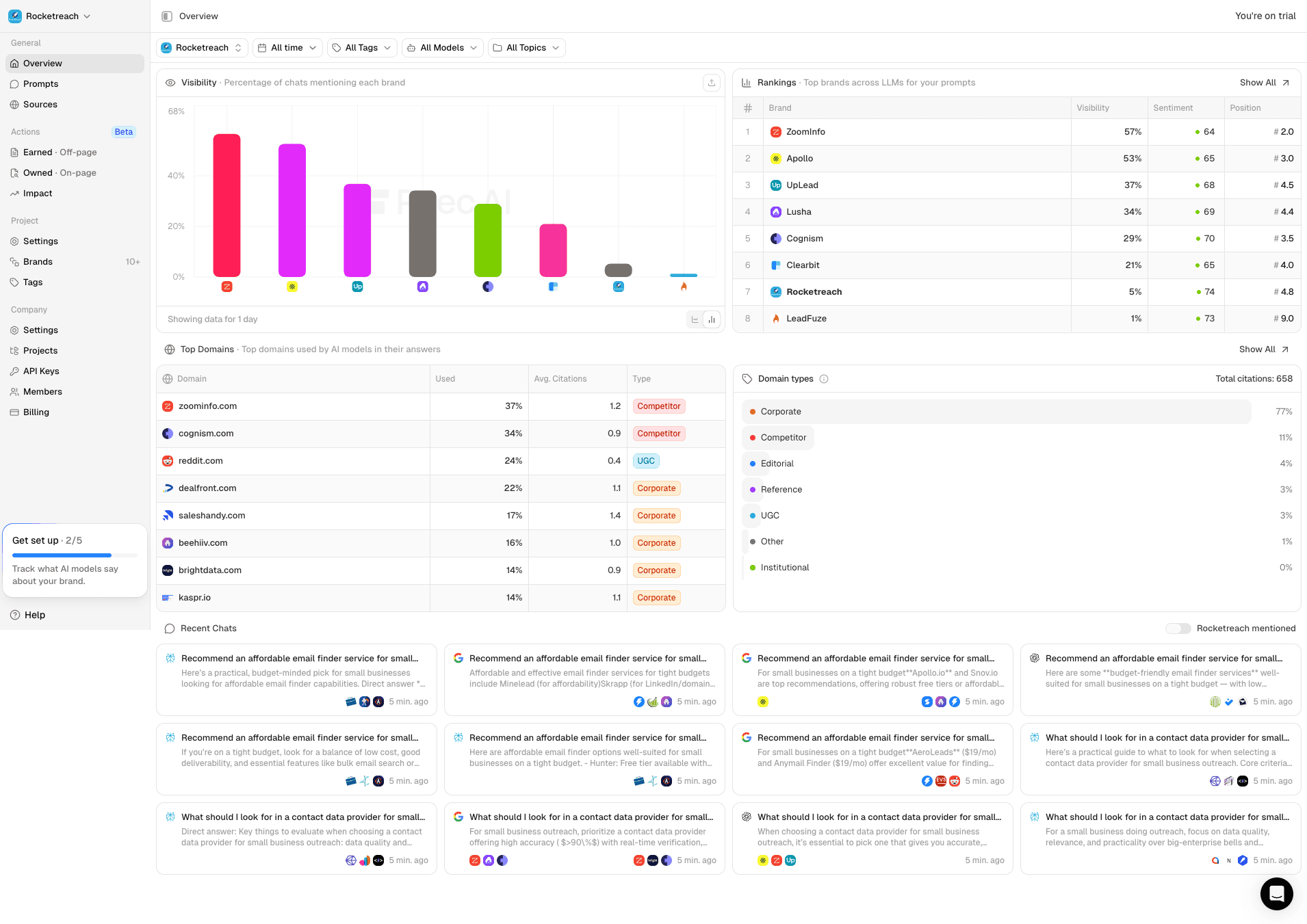

Caption: Texta overview surface used for ongoing monitor -> interpret -> act operations.

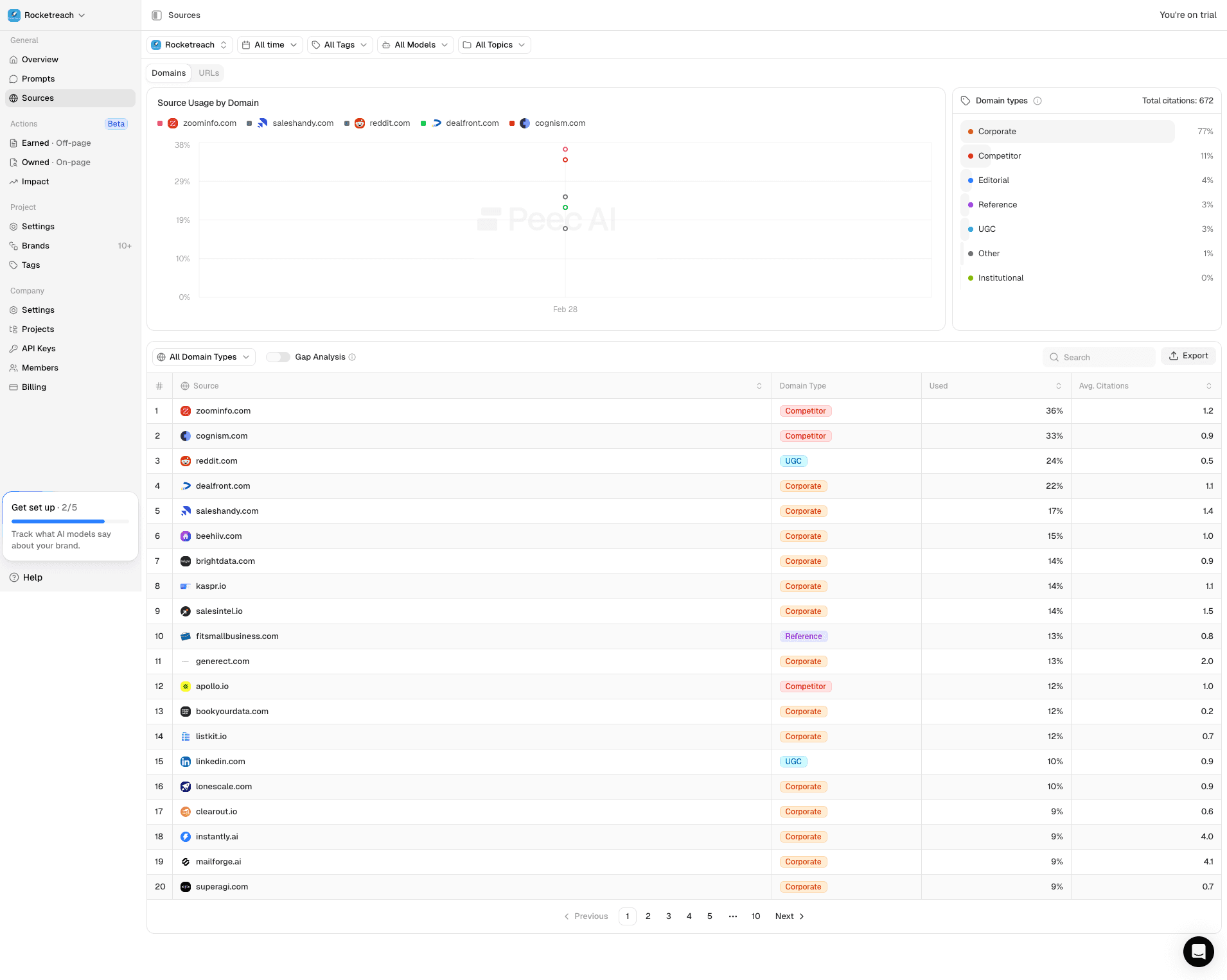

Caption: Texta source/domain diagnostics used to prioritize interventions and measure citation shifts.

Caption: Otterly.ai public page snapshot showing positioning and plan framing.

Caption: Otterly.ai scoped plan/features block used for side-by-side comparison.

Scenario Score Chart

Caption: Scenario model for an execution-focused GEO team (weights prioritize actionability and source-level intervention speed).

At-a-Glance Functional Comparison

| Area | Texta | Otterly.ai |
|---|---|---|
| Primary positioning | AI visibility operations and intervention workflow | AI search monitoring and optimization with plan-based prompt tiers |
| Plan structure | Operational workflow value proposition | Lite/Standard/Premium with 15/100/400 prompts |
| Platform coverage | Multi-model monitoring and source analysis | Google AI Overviews, ChatGPT, Perplexity, MS Copilot (+ AI Mode/Gemini add-ons) |
| Diagnostics framing | Source and mention diagnostics linked to next steps | Brand visibility index, domain ranking, link citations analysis, GEO audits |
| Team model | Operators with recurring intervention ownership | Teams needing quick setup and dashboard-first monitoring |

Pricing Snapshot (Public Info, checked March 14, 2026)

| Plan | Otterly.ai price | What is included |
|---|---|---|
| Lite | $25/mo | 15 search prompts, daily tracking, core reporting features |
| Standard | $160/mo | 100 search prompts, daily tracking, add 100 prompts for $99 |
| Premium | $422/mo | 400 search prompts, daily tracking, add 100 prompts for $99 |
| Enterprise | Custom | Custom prompts, SSO, onboarding, and custom payment options |

Pricing interpretation notes:

- Otterly pricing includes optional add-ons for prompt volume and additional engines; model total cost with these included.

- Public help docs and the pricing page should be cross-checked during procurement due to periodic updates.

- If your team scales prompt volume quickly, add-on costs can change plan economics significantly.
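To make the add-on effect concrete, here is a minimal Python sketch of a monthly cost model built from the snapshot figures in the pricing table. It rests on several assumptions that are not confirmed by the source: that the $99 add-on block recurs monthly, that blocks can be stacked, and that Lite cannot buy add-on prompts (the table lists no add-on for it). The `engine_addon_cost` parameter is a hypothetical placeholder, since engine add-on prices are not listed on this page. Verify all figures against the vendor's current pricing before relying on the output.

```python
import math

# Plan figures from the pricing snapshot (checked March 14, 2026).
# "addon_allowed" for Lite is an assumption: the table lists no add-on for it.
PLANS = {
    "Lite": {"base": 25, "prompts": 15, "addon_allowed": False},
    "Standard": {"base": 160, "prompts": 100, "addon_allowed": True},
    "Premium": {"base": 422, "prompts": 400, "addon_allowed": True},
}
ADDON_BLOCK_PROMPTS = 100  # prompts per add-on block
ADDON_BLOCK_PRICE = 99     # assumed to recur monthly and to be stackable


def monthly_cost(plan, prompts_needed, engine_addon_cost=0):
    """Return the estimated monthly cost, or None if the plan cannot cover the volume.

    engine_addon_cost is a hypothetical flat monthly figure you supply yourself.
    """
    p = PLANS[plan]
    extra = max(0, prompts_needed - p["prompts"])
    if extra and not p["addon_allowed"]:
        return None
    blocks = math.ceil(extra / ADDON_BLOCK_PROMPTS)
    return p["base"] + blocks * ADDON_BLOCK_PRICE + engine_addon_cost


def cheapest_plan(prompts_needed, engine_addon_cost=0):
    """Return (plan_name, cost) for the lowest-cost plan that covers the volume."""
    costs = {
        name: monthly_cost(name, prompts_needed, engine_addon_cost)
        for name in PLANS
    }
    viable = {name: cost for name, cost in costs.items() if cost is not None}
    return min(viable.items(), key=lambda item: item[1])


if __name__ == "__main__":
    for volume in (15, 250, 400):
        print(volume, cheapest_plan(volume))
```

Under these assumptions, 250 prompts comes to $358 on Standard (two add-on blocks) versus $422 on Premium, while 400 prompts comes to $457 on Standard versus $422 on Premium. The crossover is the point worth checking against your own projected prompt volume.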

Review Signal Snapshot

G2 snapshot: Otterly.ai is listed at 4.9/5 (41 reviews). Common positive themes include easy setup and actionable monitoring; some reviewers mention a learning curve and cost sensitivity as usage scales.

Who Should Choose Which Tool

Texta is typically better for

- Teams needing deeper action planning from source and mention shifts.

- Organizations where weekly execution throughput is a primary KPI.

- Buyers who want fewer translation steps from signal to owned task.

Otterly.ai is typically better for

- Teams that need fast setup and clear prompt-tier pricing.

- Monitoring-first organizations still building GEO operating maturity.

- Buyers wanting straightforward dashboard visibility before heavier workflow tooling.

Buyer Questions This Page Answers

- How many prompts do we truly need daily vs weekly by market segment?

- Will add-on pricing for extra prompts/engines change our budget materially?

- Do we need monitoring only, or intervention workflow depth now?

- Can our team convert dashboard insights into shipped improvements consistently?

- How much reporting detail do executives require beyond visibility snapshots?

- What ownership model makes this stack sustainable over 2-3 quarters?

30-Day Evaluation Framework

Use the same prompt set, competitors, and reporting cadence in both tools.

| Criterion | Weight | How to score |
|---|---|---|
| Time from signal to assigned action | 25% | Median time from alert to owned task |
| Insight quality for weekly review | 20% | Team can explain what changed and why |
| Source/citation intervention throughput | 20% | Number of completed interventions |
| Reporting readiness | 20% | Time to produce decision-ready weekly update |
| Team adoption confidence | 15% | % of owners using the platform weekly |
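The weights in the table can be rolled up into a single comparable number per tool. The sketch below is one possible way to do that in Python, assuming each criterion is first normalized by your team to a 1-5 score (the page does not prescribe a scale). The criterion keys and the example scores are hypothetical placeholders, not measured results for either product.

```python
# Weights in percent, taken from the evaluation table.
# The key names are hypothetical shorthand for the five criteria.
WEIGHTS = {
    "signal_to_action_time": 25,
    "insight_quality": 20,
    "intervention_throughput": 20,
    "reporting_readiness": 20,
    "team_adoption": 15,
}


def weighted_score(scores):
    """Combine per-criterion scores (assumed 1-5 scale) into one weighted score."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total = sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)
    return total / sum(WEIGHTS.values())


if __name__ == "__main__":
    # Placeholder scores for illustration only; replace with your pilot results.
    example_scores = {
        "signal_to_action_time": 4,
        "insight_quality": 3,
        "intervention_throughput": 5,
        "reporting_readiness": 3,
        "team_adoption": 4,
    }
    print(weighted_score(example_scores))
```

Scoring both tools with the same function and the same normalization rules keeps the comparison consistent. Agree on how raw measurements (median hours, intervention counts, adoption percentage) map to the 1-5 scale before the pilot starts, so the mapping is not adjusted after results are in.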

Migration Notes

- Pilot core prompt clusters first to validate intervention quality.

- Track cost impact of add-ons early to avoid budget surprises.

- Standardize one weekly review format with action owner and due date.

- Expand scope only after demonstrating measurable visibility and citation improvements.

Related comparisons

Use these internal comparison pages to evaluate adjacent options and keep your research workflow in one place.

| Page | Focus | Link |
|---|---|---|
| Texta vs peec.ai | Practical head-to-head for teams choosing between integrated execution workflow and analytics-first GEO monitoring. | Open page |
| Texta vs Profound | Detailed comparison for organizations balancing operator speed against enterprise reporting and governance requirements. | Open page |
| Texta vs Promptwatch | Practical guide for teams weighing market-facing AI visibility operations against prompt observability priorities. | Open page |
| Texta vs Semrush | Useful for teams balancing classic SEO stack depth against AI-answer visibility execution and action loops. | Open page |
| Texta vs Ahrefs | Decision guide for organizations running both SEO and GEO priorities with limited team bandwidth. | Open page |
| Texta vs AirOps | Clear breakdown for teams choosing between optimization insights and production automation as their first AI investment. | Open page |
| Texta vs AthenaHQ | Built for teams evaluating two AI visibility-focused tools with different execution and reporting priorities. | Open page |
| Texta vs rankshift.ai | Decision framework for teams that need both ranking clarity and faster execution from visibility signals. | Open page |